From Microfiche to Machine Learning

It helps to remember that AI isn’t the first shift we’ve lived through—it’s just the latest.

I grew up Gen Y, which is a term you don’t hear much anymore. I usually get lumped in with younger Gen Xers or older Millennials, and I always felt like I shared something with both groups. I grew up sandwiched between the generation that invented the internet and the generation that never knew life without it. It gave me a unique perspective on how to approach technology that I think has served me well.

In middle school, I learned the Dewey Decimal System, how to locate a book, how to load microfiche, and how to scroll through it to find old newspaper articles. Research was physical. I wrote things down. I made lists that couldn’t be easily sorted. And if I messed up, I had to start over. But by high school, I had my first introduction to Boolean search terms and the early databases that came with them. Suddenly, the haystack was much larger, and it required new skills—clicking AND, OR, and NOT on and off, sliding date ranges forward and back, and eventually learning that the crown-jewel limiters were “peer-reviewed journal” and “full text only.”

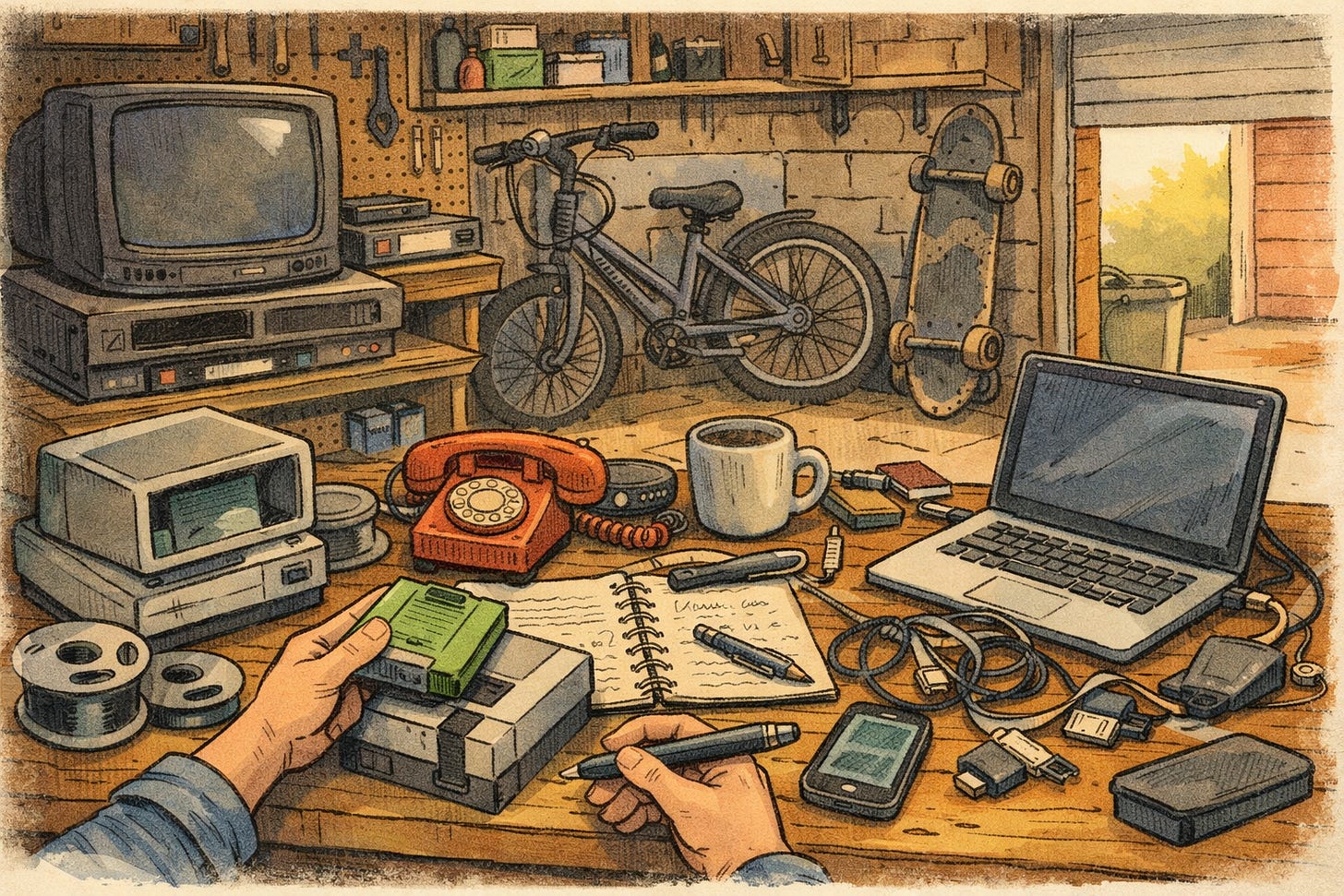

I was part of an in-between generation. We rode our bikes until the streetlights came on. We grew into carrying beepers, but we returned those pages on rotary phones, the ones with the super-long cords so you could walk around the house and get a little privacy if someone was in the kitchen.

I remember those old Apple machines with the green screens and a lawnmower game, which eventually gave way to early DOS and The Oregon Trail. We didn’t learn to play by watching Twitch clips or tutorial videos, or even by reading the little manuals that came with Nintendo cartridges. You turned the game on and started pressing buttons until you figured it out.

That habit, learning by poking at things, by pressing buttons and seeing what happens, never really left me.

Which is good, because the pace of change only increased. And when your whole history is one rapid change after another, you develop a tolerance for mess. You stop expecting things to be intuitive or perfectly explained. You learn by poking at them, by trying things, by seeing what happens. You play. And that habit, learning through exploration instead of waiting for instructions, has shaped how I’ve approached every new technology since.

Years later, when I started thinking more intentionally about teaching with technology, I had the good fortune to meet Alan Levine. He’s a prolific blogger and someone with a distinctly playful energy when it comes to technology. At the time, I was trying to help faculty work through what I’ve come to think of as Ostrich Syndrome: the urge to bury your head in the sand and hope the new technology goes away.

As part of a faculty training on teaching online, I asked Alan to record a video conversation with me, hoping his infectious energy would get the faculty more excited. We weren’t trying to solve anything so much as think together, talking about what makes people anxious about technology and why playfulness is a better response than frustration.

One idea from that conversation really stuck with me. Alan pointed out that faculty often expect technology to work perfectly every single time, even though nothing else in teaching works that way. You walk into a classroom with a lesson plan, and it never goes 100% according to plan. Not once. No teacher has ever had the perfect lesson unfold exactly as imagined, not in the whole history of forever. You adapt it to the class in front of you, to the students who show up, the questions they bring, and the confusions they have. And you don’t do that once in a while—you adapt every single time, even in the most traditional classroom.

But for some reason, when it comes to technology, some folks expect it to behave perfectly, as though that expectation will make the experience more stable, when it actually makes it less so. And when it doesn’t behave, and it never does, the frustration we see from faculty can be immense.

Alan’s advice was simple but profound: if you expect technology to misbehave, to go a little awry, you can respond with playfulness instead of anger.

That idea landed hard for me. The freedom to futz and play around with all of this seemed liberatory after I had learned to put on the airs of the academy. It gave me the courage to try, fail, fix, adjust, and try it all over again. It is a mindset that has served me well.

Talking with Alan helped me realize that it’s okay to explore in front of students. Some of the best classroom experiences I’ve ever had came from figuring something out together.

Teaching has never been a clean process. Things break. Plans fall apart. You adjust. That’s why his idea of futzing has always resonated with me. It makes sense because it acknowledges how learning actually happens. Failure isn’t the end of learning; it’s the first attempt at it.

But it’s also about being human with your students. About letting go of the idea that teaching happens from some perfectionist ivory tower. Yes, you’re the expert in your field; of course you are. But that doesn’t mean everything unfolds perfectly around you. You’re not standing calmly in the eye of the storm, dispensing flawless knowledge. What students are really learning from is the experience of interacting with someone who has expertise: the back-and-forth, the friction, the process.

I’ve always said you can’t just open up someone’s head and pour what you know about poetry into it. Learning happens in the interaction. It happens in the struggle. It happens when you engage with the material, not just when you receive it.

And once you see teaching that way, moments like this, when a new technology shows up and shifts everything just a little, stop feeling like an existential threat. They start feeling like a new thing to futz around with.

This mindset, I think, is critical when we start thinking about how and when to use AI in the learning process. It helps to remember that AI isn’t the first shift we’ve lived through—it’s just the latest.

What a fun way to revisit a nine-year-old conversation; my idealized perspective has not changed. I am not sure if I ever convinced anyone, but I have learned the power of messing up in public, of making mistakes. It blows away the need to spend energy on perfection. Making mistakes is not a big deal if you can demonstrate, via futzing or honesty, the processes you use to counter a mistake.

I can identify with all the items in your generated image. By years I’m just on the edge of boomers, but my trajectory parallels yours. There were no computers in our home; I played a bit on one my friend’s dad had. In my senior year we got a programming class, learning (gulp) FORTRAN. We colored in commands with pens on punch cards and drove once a week to the one school with a mainframe. I sound old.

I never bought deeply into generational generalizations; they usually "feel" right, especially when they match one’s own memory. I recall that when I was at Maricopa, we had Bill Strauss or Neil Howe give a big talk when their book Generations was hot. At least it spawned good conversations.

Also, I am hesitant to put GenAI in the same basket as other new technologies. With almost everything before, there was a period to explore and make choices, to decide whether to adopt them. The speed here is casting that to the wind.

But I am thrilled you made the effort to reach out after all these years. It is those singular experiences that I thrive on these days. Now that I am subscribed, I can see what happens.

Give my regards to the Sonoran Desert!